XandLLM: High-Performance LLM Inference in Rust with Knowledge Distillation

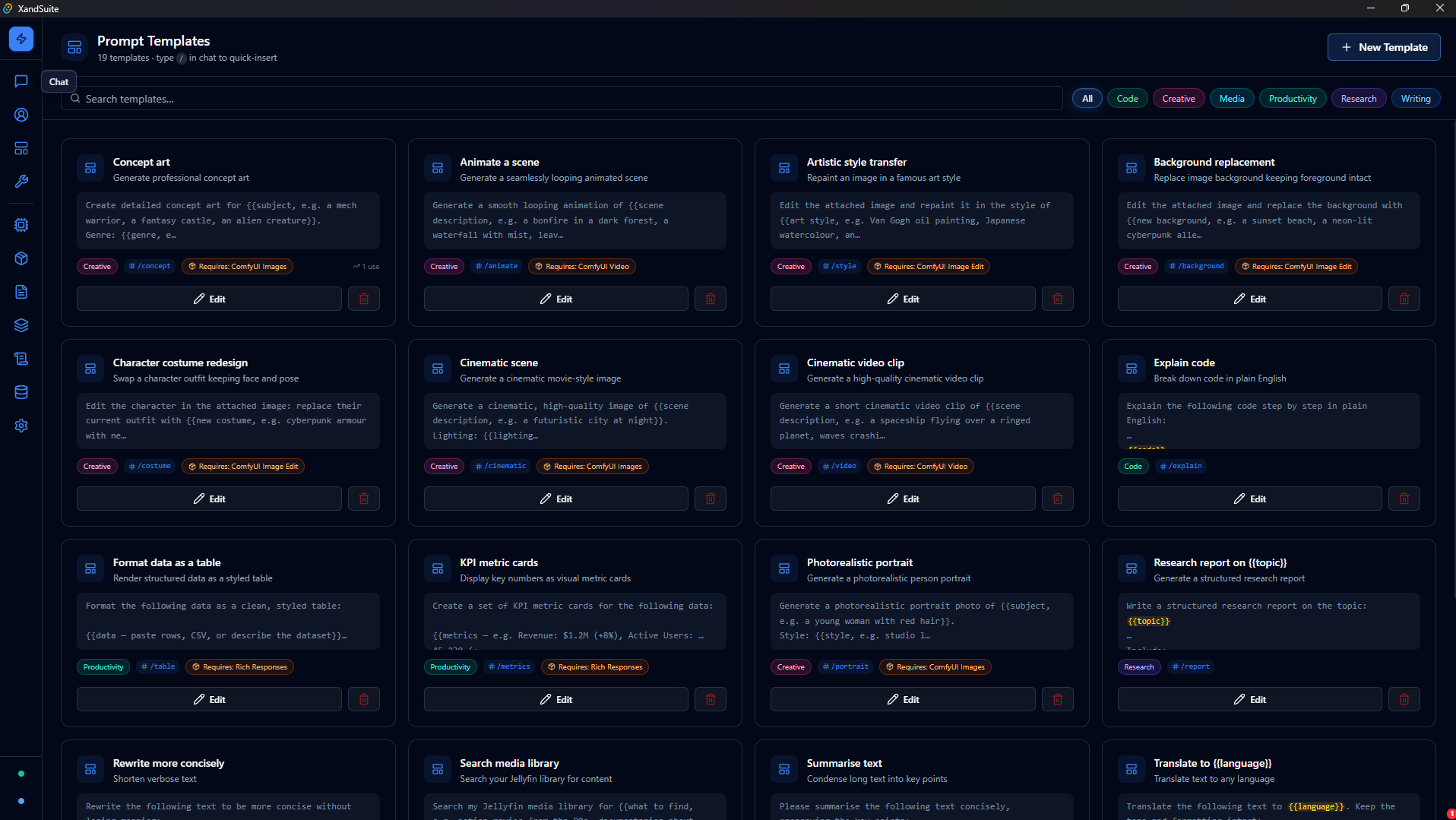

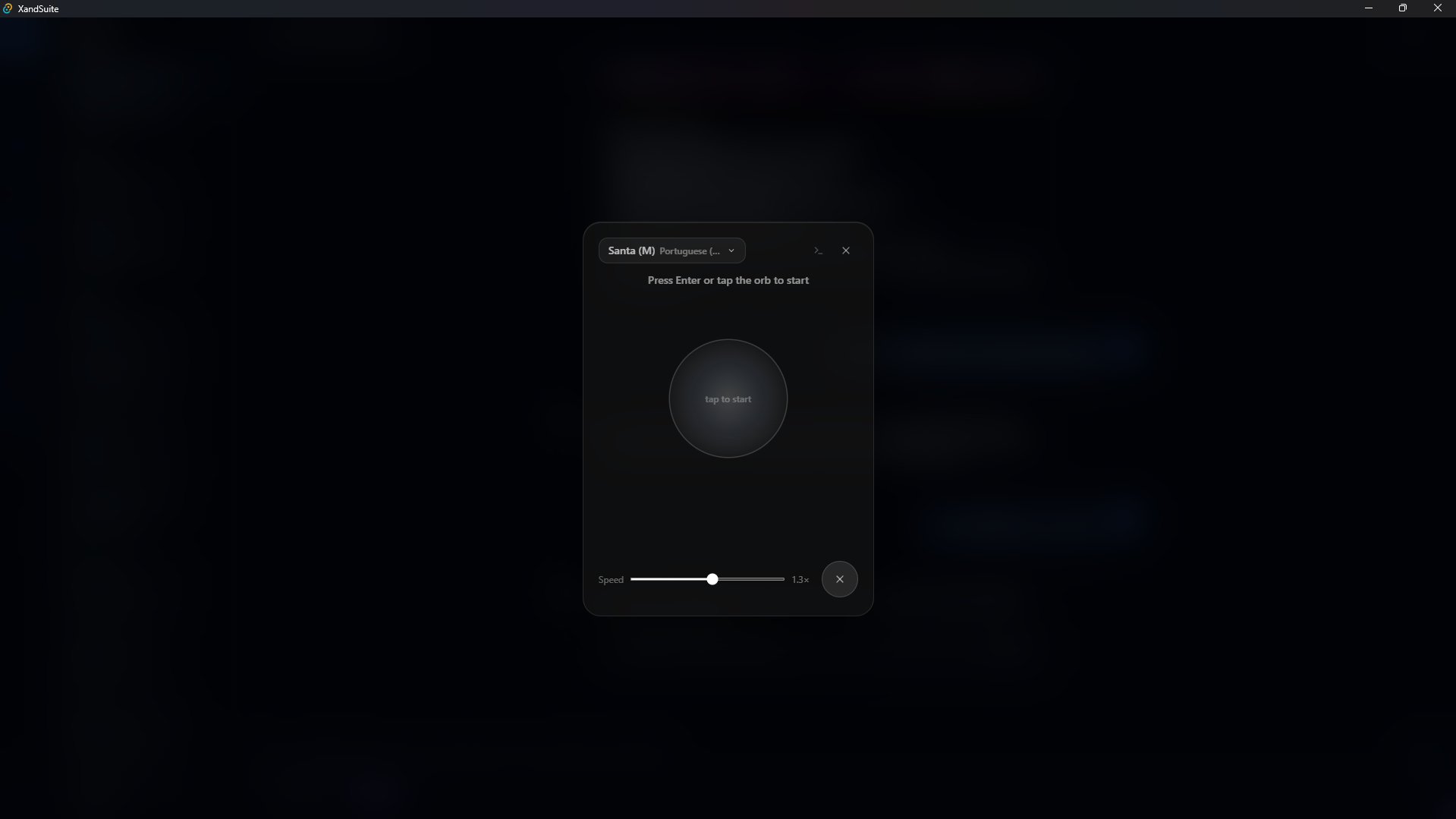

Introducing XandLLM, a production-grade LLM inference engine written in Rust. It offers an OpenAI-compatible API, GPU acceleration, and built-in knowledge distillation for creating smaller, faster models.
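For readers new to knowledge distillation, here is a minimal sketch of one common formulation of the training signal: the student is pushed to match the teacher's temperature-softened output distribution by minimizing a KL divergence scaled by the square of the temperature. This is an illustration only, not XandLLM's code. The function names `softmax_with_temperature` and `distillation_loss` are hypothetical, and the sketch uses only the Rust standard library on plain `f64` slices rather than GPU tensors.

```rust
/// Numerically stable softmax over `logits / temperature`.
/// Hypothetical helper for illustration; not part of XandLLM.
fn softmax_with_temperature(logits: &[f64], temperature: f64) -> Vec<f64> {
    let scaled: Vec<f64> = logits.iter().map(|&z| z / temperature).collect();
    // Subtract the maximum before exponentiating to avoid overflow.
    let max = scaled.iter().copied().fold(f64::NEG_INFINITY, f64::max);
    let exps: Vec<f64> = scaled.iter().map(|&z| (z - max).exp()).collect();
    let sum: f64 = exps.iter().sum();
    exps.iter().map(|&e| e / sum).collect()
}

/// Distillation loss for a single token position:
/// T^2 * KL(softmax(teacher / T) || softmax(student / T)).
/// Hypothetical helper for illustration; not part of XandLLM.
fn distillation_loss(teacher_logits: &[f64], student_logits: &[f64], temperature: f64) -> f64 {
    assert_eq!(teacher_logits.len(), student_logits.len());
    assert!(temperature > 0.0);

    let p = softmax_with_temperature(teacher_logits, temperature);
    let q = softmax_with_temperature(student_logits, temperature);

    let mut kl = 0.0;
    for (&pi, &qi) in p.iter().zip(q.iter()) {
        // Terms with zero teacher probability contribute nothing to the KL sum.
        if pi > 0.0 {
            kl += pi * (pi.ln() - qi.ln());
        }
    }
    // The T^2 factor keeps gradient magnitudes comparable across temperatures.
    kl * temperature * temperature
}
```

Calling `distillation_loss(&teacher, &student, 2.0)` with identical logit slices yields a value near zero, and the loss grows as the student's distribution drifts from the teacher's. In practice this term is usually averaged over all token positions and often mixed with the ordinary cross-entropy loss on ground-truth labels.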